Validating a study design in TrialKit is often required for compliance with industry practices. At the very least, it’s a recommended step that documents how data is created and stored. TrialKit’s Floyd AI saves significant time by automatically recording how the data was created and stored, along with what happened during that process.

It all starts with AI Data Generation. When Floyd creates data in a study, it keeps track of the entire process for future reference.

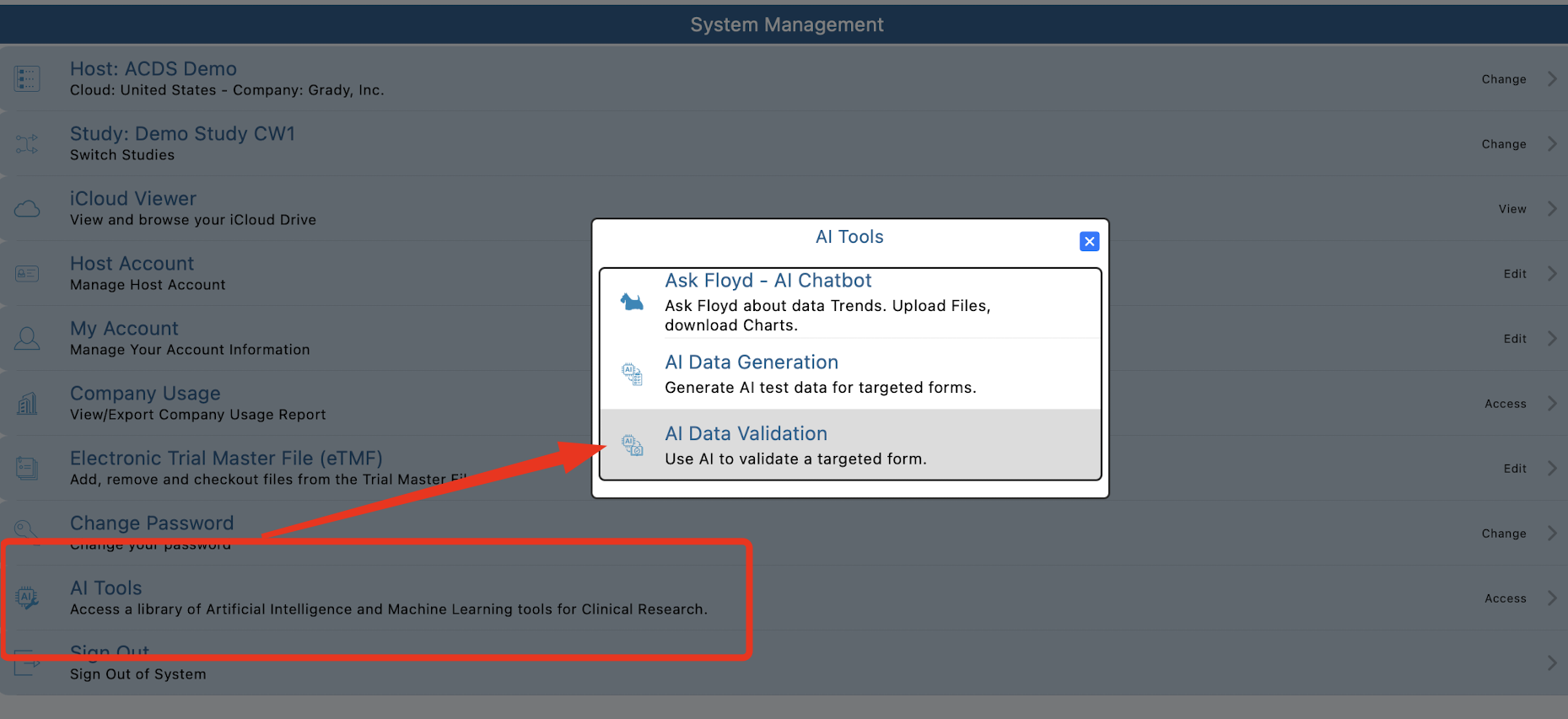

To access validation results, open the Data Validation option from the Floyd AI Tools menu. This option is currently available only in the App:

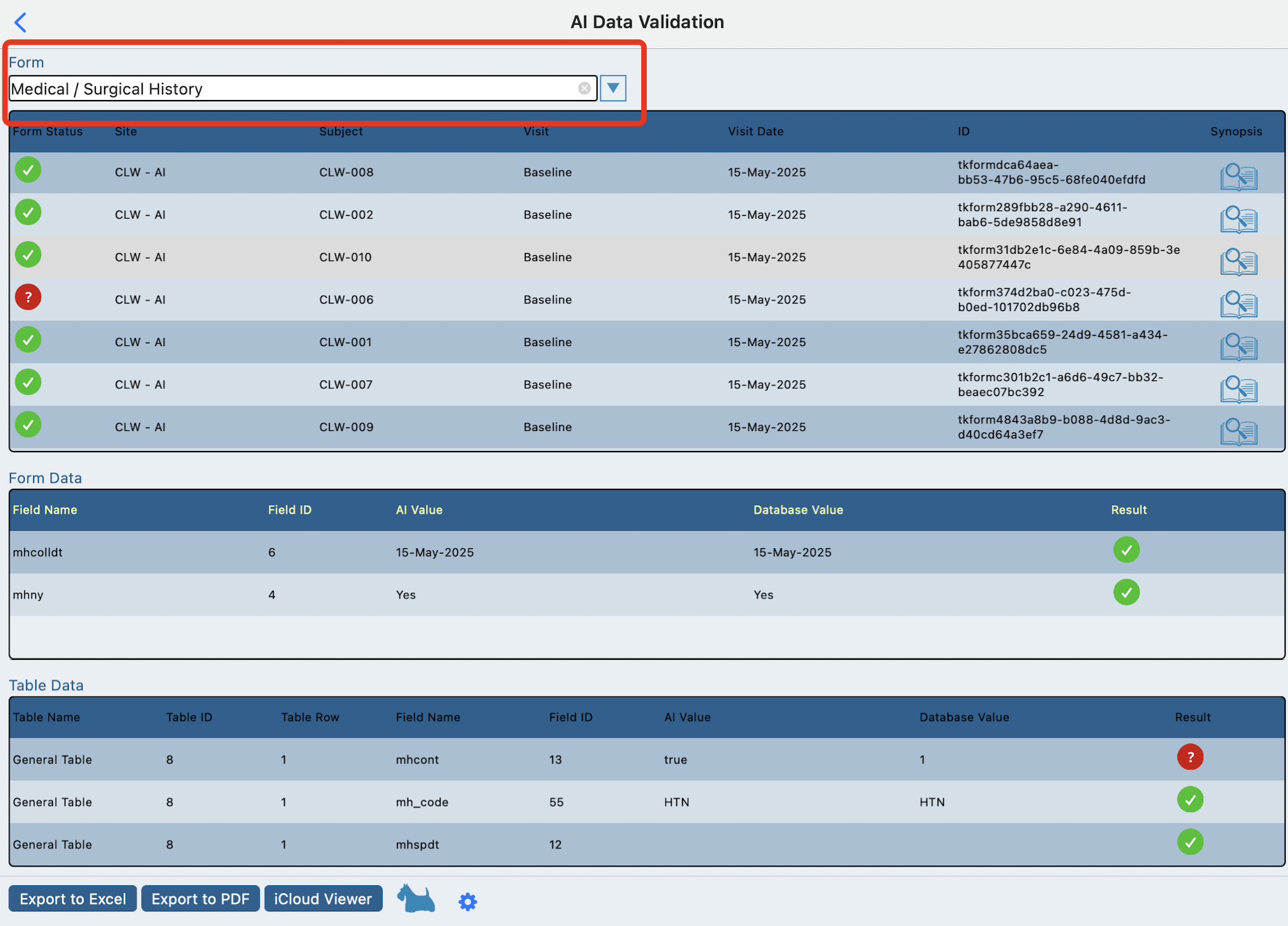

View data generation transaction history

First, select a form to view its data results. Then tap any subject in the first table.

This displays a second table listing the form’s fields, with the data the AI generated shown next to the data TrialKit stored.

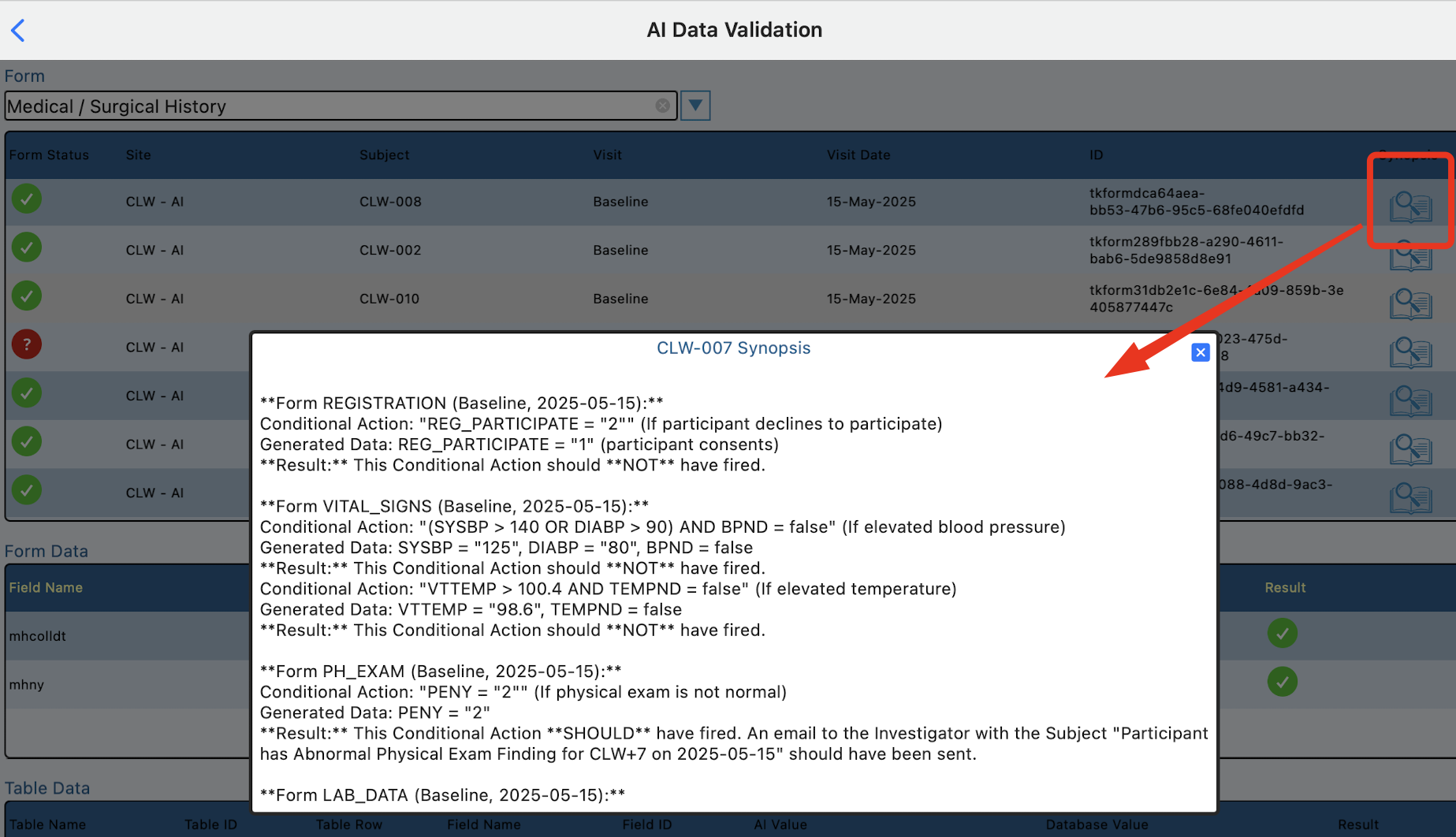
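
The side-by-side table makes it easy to spot fields where the stored value differs from the generated one. If you transcribe those values for your validation records, the same comparison can be sketched in a few lines of Python. This is only an illustration: the `FieldResult` structure, the field names, and the values are hypothetical and do not represent a TrialKit export format or API.

```python
from dataclasses import dataclass


@dataclass
class FieldResult:
    """One row of the comparison table (hypothetical layout)."""
    field: str      # form field name
    generated: str  # value the AI generated
    stored: str     # value that was saved in the study


def find_mismatches(rows):
    """Return the rows whose stored value differs from the generated value."""
    return [r for r in rows if r.generated.strip() != r.stored.strip()]


# Illustrative values only, not real study data.
rows = [
    FieldResult("systolic_bp", "128", "128"),
    FieldResult("visit_date", "2024-03-01", "2024-03-01"),
    FieldResult("weight_kg", "72.5", "72"),
]

mismatches = find_mismatches(rows)
for r in mismatches:
    print(f"{r.field}: generated={r.generated!r}, stored={r.stored!r}")
```

Any row this returns is a candidate for follow-up, for example a field whose format or edit checks altered the value when it was saved.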

Synopsis of form logic (conditional actions)

When the data is generated and stored, Floyd AI keeps an evaluation log of the conditional actions across all forms for each subject. To view it later, tap the Synopsis icon in the first table.

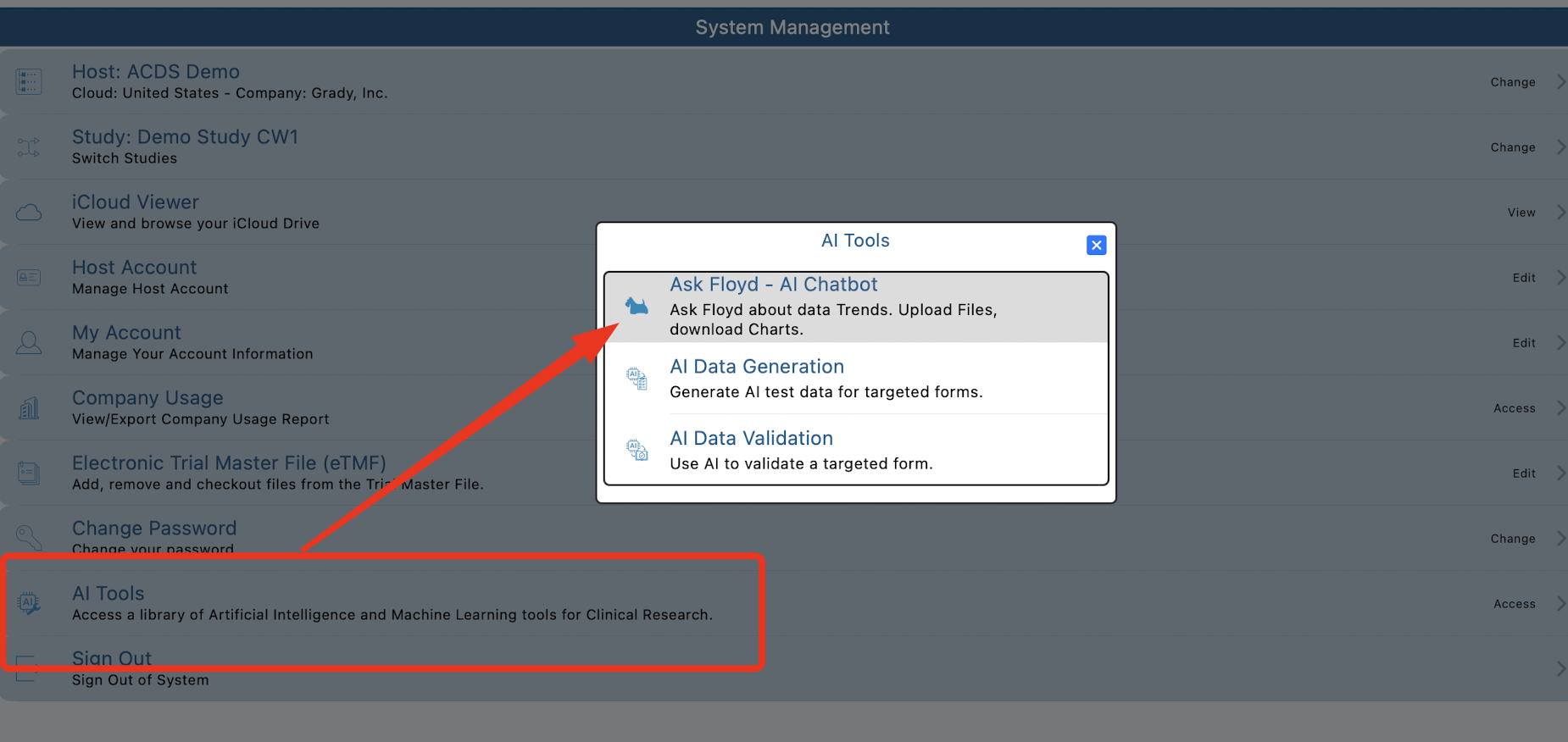

Ask Floyd about generated data

To ask about the data, return to the home screen and open the Floyd chatbot.

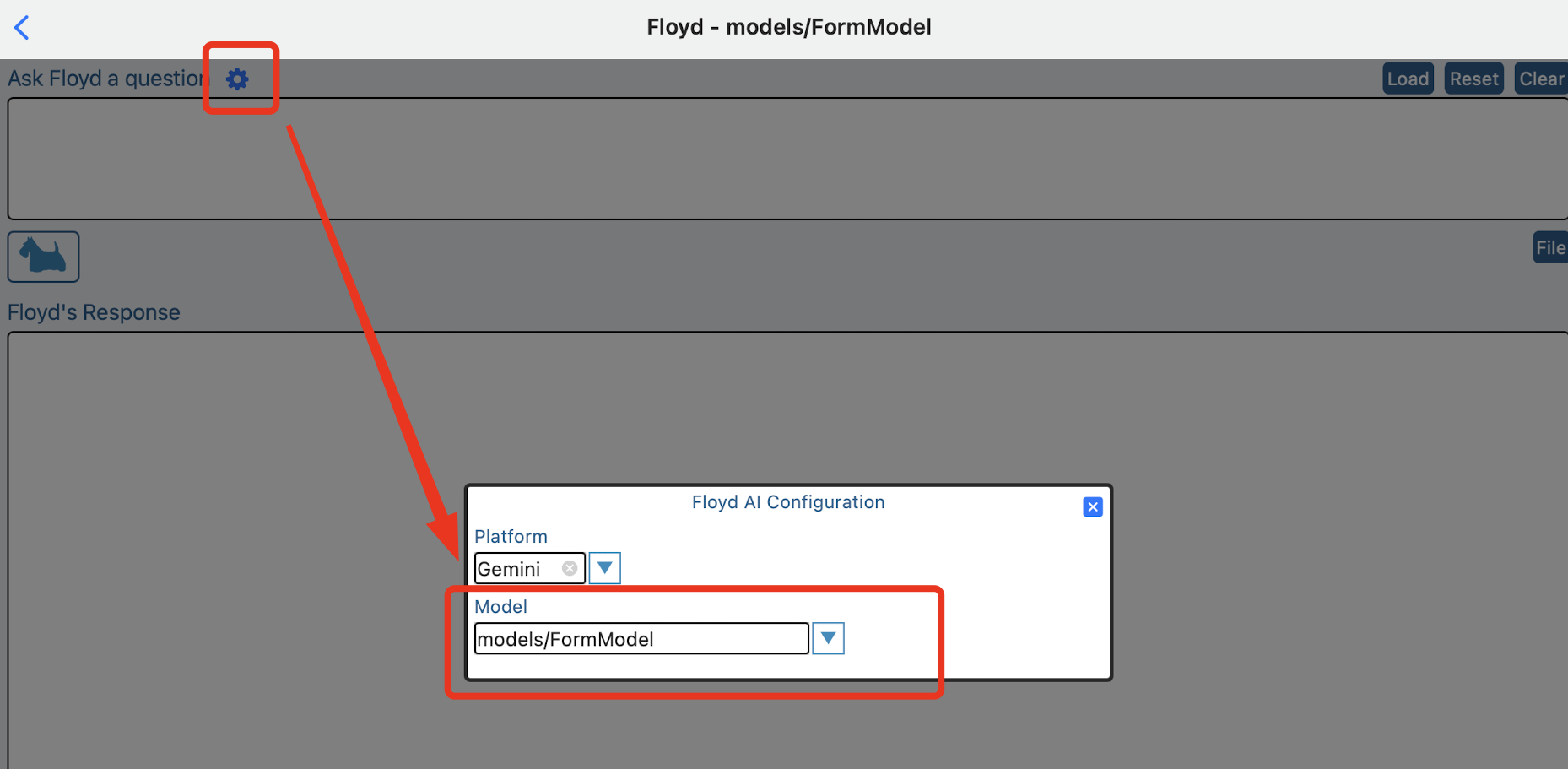

Select the Form Model from the chatbot settings.

In the prompt panel, ask any question about subjects in the AI sites.

Examples of Validation Questions

Unexpected Data

“Explain your reasoning behind the blood pressure data for subject Test-007”

“I see there is an AE reported prior to the procedure visit. Is this probable?”

Recruitment

"What is the current enrollment rate across all sites compared to our projected timeline?"

"Show me the breakdown of participants by ethnicity and age group in the Phase II cohort."

"Which sites have the highest screen-fail rates, and what are the primary reasons for those failures?"

"How many patients have been active in the study for more than 12 months?"

Safety

"Are there any recurring Grade 3 or higher Adverse Events appearing in the 50mg dosage group?"

"List all Serious Adverse Events (SAEs) reported in the last 48 hours."

"Is there a statistically significant correlation between [Drug X] and reports of elevated liver enzymes in patients over 60?"

"How many patients have discontinued the study due to gastrointestinal issues?"

Efficacy

"What is the mean change from baseline in [Primary Endpoint, e.g., HbA1c levels] at Week 12?"

"Compare the efficacy outcomes between the treatment arm and the placebo arm for the smoker subgroup."

"How many participants achieved a 50% reduction in symptoms by the end of the first month?"

Data Quality

"Which sites have the highest number of outstanding data queries or missing forms?"

"Identify any patients whose lab results fall outside the expected physiological range but weren't flagged as AEs."

"Are there any inconsistencies between the medication logs and the reported patient diaries for Site 104?"

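Some of these questions can also be spot-checked by hand to confirm Floyd's answers. As a rough sketch, the out-of-range lab question above comes down to the cross-check below. The record layout, subject IDs, test name, and reference range are placeholders chosen for illustration. They are not TrialKit data structures, and real reference ranges should come from your study's lab specifications.

```python
def unflagged_out_of_range(lab_results, reference_ranges, ae_subjects):
    """Return (subject, test, value) for out-of-range results with no AE on record.

    lab_results:      iterable of (subject_id, test_name, value) tuples
    reference_ranges: dict mapping test_name -> (low, high), inclusive
    ae_subjects:      set of subject IDs that have at least one AE reported
    """
    findings = []
    for subject, test, value in lab_results:
        low, high = reference_ranges[test]
        out_of_range = not (low <= value <= high)
        if out_of_range and subject not in ae_subjects:
            findings.append((subject, test, value))
    return findings


# Hypothetical inputs for illustration only.
reference_ranges = {"ALT_U_L": (7, 56)}  # placeholder range
lab_results = [
    ("Test-001", "ALT_U_L", 30),   # in range
    ("Test-002", "ALT_U_L", 120),  # out of range, no AE reported
    ("Test-003", "ALT_U_L", 95),   # out of range, AE already reported
]
ae_subjects = {"Test-003"}

findings = unflagged_out_of_range(lab_results, reference_ranges, ae_subjects)
print(findings)
```

Comparing a manual check like this against the chatbot's response is one way to document that its answers match the stored data.
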
Pro-Tip

The real power of an AI model in this space isn't just identifying what happened, but helping you investigate why.

Example: Instead of just asking "How many people dropped out?", try:

"Analyze the profiles of the last 10 patients who withdrew consent. Do they share any common demographic traits or specific side effects?"